Logstash has been a workhorse in data processing pipelines for years, but it was not designed with today’s security operations in mind. Security teams now deal with massive telemetry volumes, rising SIEM costs, and diverse log formats that require constant normalization. In this environment, Logstash shows its age: manual configuration, outdated parsing, and scalability bottlenecks introduce fragility instead of efficiency.

One SOC manager described spending days tuning Grok patterns, only to have a single vendor update break the parsing logic. A global enterprise reported running eight separate Logstash clusters to handle daily volumes, requiring specialist skills to keep them operational. As pipelines scale, these weaknesses shift from operational nuisances to barriers to security visibility.

The case for modern security data pipelines

Modern SOCs require more than raw log shipping. They need data pipelines that collect logs, normalize them into consistent schemas, reduce ingestion volumes, and integrate directly with SIEMs and security analytics platforms. At the same time, compliance requirements demand reliable retention of full-fidelity logs in cost-efficient storage.

Instead of relying on manual parsing and ad hoc scripts, security teams benefit from automated pipelines that:

- Collect all the necessary logs.

- Normalize data to standards like ASIM or OCSF.

- Enforce consistent formatting across heterogeneous sources.

- Reduce SIEM ingest costs by filtering redundant or low-value events.

- Maintain forensic integrity by archiving raw data to immutable storage (e.g., Azure Blob, Sentinel data lake).
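
The stages listed above can be sketched in a few lines of Python. This is a minimal illustration only, not DataStream's implementation: the event fields, the `FIELD_MAP` mapping, and the `LOW_VALUE_ACTIVITIES` set are all hypothetical, and the target field names are illustrative rather than actual ASIM or OCSF attributes. An in-memory list stands in for immutable storage.

```python
import hashlib
import json

# Hypothetical raw events; field names are illustrative, not from a real vendor.
RAW_EVENTS = [
    {"src": "10.0.0.5", "act": "login_failed", "sev": "high"},
    {"src": "10.0.0.5", "act": "heartbeat", "sev": "info"},
    {"src": "10.0.0.9", "act": "login_ok", "sev": "low"},
]

# Assumed mapping from vendor keys to a common schema (names are made up).
FIELD_MAP = {"src": "src_ip", "act": "activity", "sev": "severity"}
LOW_VALUE_ACTIVITIES = {"heartbeat"}


def normalize(event):
    """Rename vendor-specific keys to the common schema."""
    return {FIELD_MAP.get(key, key): value for key, value in event.items()}


def archive_record(event):
    """Serialize the untouched raw event with a SHA-256 digest for integrity checks."""
    payload = json.dumps(event, sort_keys=True)
    return {"sha256": hashlib.sha256(payload.encode()).hexdigest(), "raw": payload}


def run_pipeline(events):
    """Archive every raw event; forward only normalized, non-low-value events."""
    archive, forwarded = [], []
    for event in events:
        archive.append(archive_record(event))
        normalized = normalize(event)
        if normalized.get("activity") not in LOW_VALUE_ACTIVITIES:
            forwarded.append(normalized)
    return archive, forwarded


archive, forwarded = run_pipeline(RAW_EVENTS)
print(len(archive), len(forwarded))  # 3 2
```

All three raw events reach the archive, while only two are forwarded, which is the separation between full-fidelity retention and reduced SIEM ingest that the list describes.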

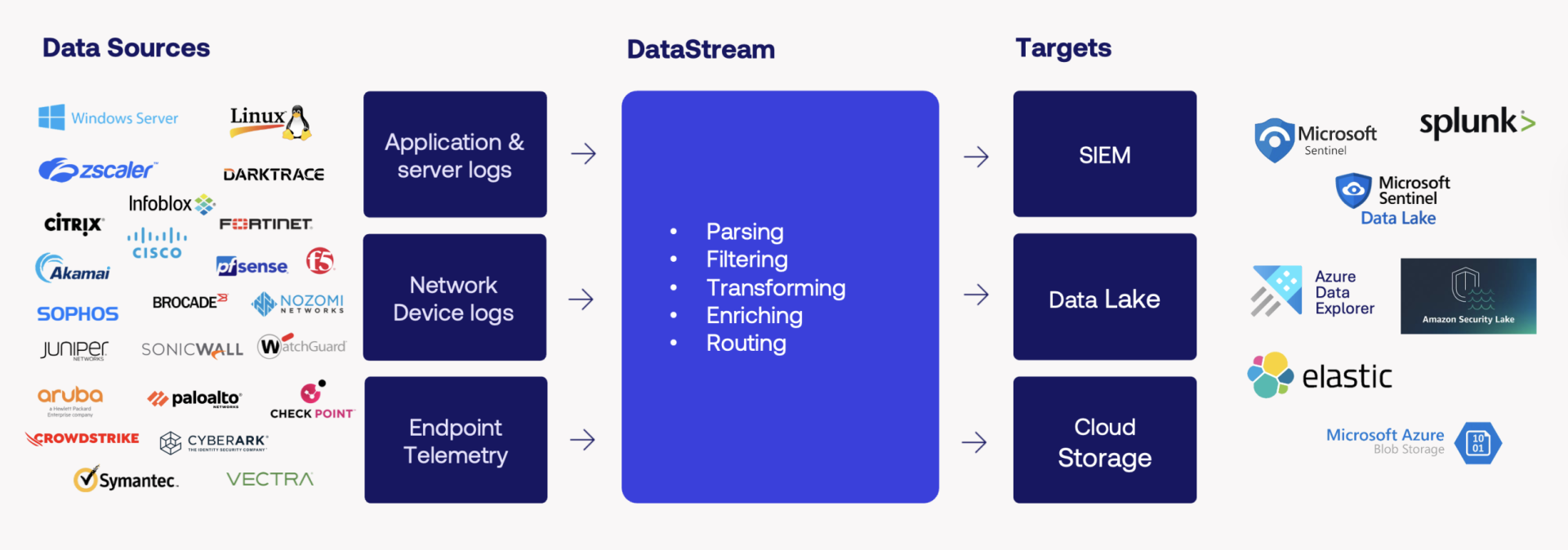

New generation pipeline

VirtualMetric’s DataStream represents this new class of security data pipeline. Unlike legacy tools, it is designed specifically for security operations: an agentless, high-performance pipeline that simplifies data handling and optimizes SIEM usage. Instead of depending on custom scripts and fragile parsing rules, DataStream automates ingestion, normalization, enrichment, filtering, redaction, and routing in real time. It connects directly to over 30 log sources using secure, read-only methods, without the need for agents or restarts.

Its performance advantages (up to 10x faster ingest and compression rates of 99% in controlled testing) reduce infrastructure overhead while preserving data fidelity. Importantly, DataStream archives all raw telemetry in long-term storage, so even when payloads are reduced for SIEM efficiency, full-fidelity data remains accessible for forensic or compliance needs.

Looking closer at Logstash challenges

After speaking with dozens of SOC managers, security engineers, and architects, we identified recurring themes in how teams experience Logstash in security pipelines. While Logstash remains widely deployed, its limitations are evident in environments with high data volumes, compliance requirements, and multi-tenant operations.

These challenges cluster around five areas: heavy manual configuration, reliance on legacy parsing, limited flexibility, poor multi-tenancy support, and scaling constraints. Below, we explore each in detail and show how VirtualMetric DataStream addresses them in practice.

Challenge 1: Heavy manual configuration effort

Deploying Logstash requires extensive manual setup and tuning. Every new log source demands custom Grok patterns and configuration files. Maintaining these across multiple environments consumes valuable engineering time.
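
To illustrate why hand-written patterns are costly to maintain, here is a small Python sketch. It uses Python's `re` module rather than Grok itself (Grok patterns are built on regular expressions, so the failure mode is similar), and the log layout and the "vendor update" are invented for the example.

```python
import re

# Hypothetical firewall log layout; not taken from any real vendor.
PATTERN = re.compile(
    r"^(?P<timestamp>\S+) (?P<action>ALLOW|DENY) "
    r"src=(?P<src_ip>\d{1,3}(?:\.\d{1,3}){3}) "
    r"dst=(?P<dst_ip>\d{1,3}(?:\.\d{1,3}){3})$"
)


def parse(line):
    """Return the extracted fields, or None when the line does not match."""
    match = PATTERN.match(line)
    return match.groupdict() if match else None


before_update = "2024-05-01T10:00:00Z DENY src=10.0.0.5 dst=192.168.1.10"
# The same event after an imagined vendor update that inserts a new field.
after_update = "2024-05-01T10:00:00Z DENY proto=tcp src=10.0.0.5 dst=192.168.1.10"

print(parse(before_update))  # dict with timestamp, action, src_ip, dst_ip
print(parse(after_update))   # None: the pattern no longer matches
```

A single inserted field is enough to make the pattern miss the event entirely, and every such pattern has to be found and fixed by hand in each environment where it is deployed.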

DataStream approach

DataStream automates onboarding with built-in auto-discovery and vendor templates. Logs from supported vendors are recognized automatically, with normalization rules applied without manual intervention, reducing onboarding time from weeks to minutes. One user shared how DataStream replaced their global Logstash instances, cutting manual log handling by 60% and giving engineers back time for threat hunting.

Challenge 2: Outdated parsing engine

Logstash parsing typically relies on Grok, a regular-expression-based pattern syntax that struggles with modern, nested formats; parsing errors then ripple downstream.

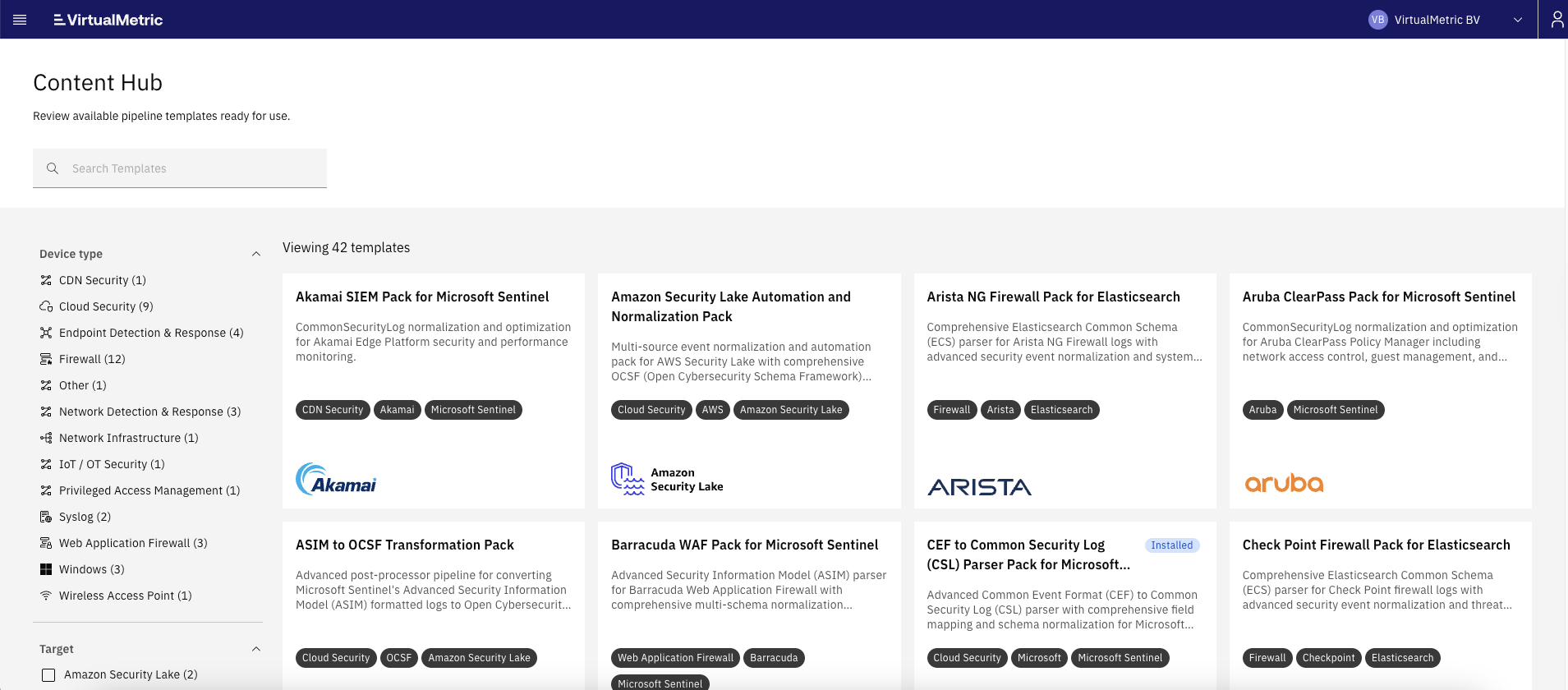

DataStream approach

Instead of brittle parsing, DataStream supports native mappings across a wide set of formats and schemas, including JSON, Syslog, CEF, LEEF, and OCSF. This ensures consistent, SIEM-ready data whether logs come from firewalls, applications, or cloud services. Data can then be routed wherever it is needed: to Microsoft Sentinel with ASIM normalization, to ADX or Blob Storage, or to Elastic, Amazon Security Lake, and any platform that supports OCSF. This flexibility improves rule accuracy, reduces false positives, and ensures future readiness.

Challenge 3: Limited flexibility and vendor lock-in

Logstash was designed for the ELK stack and remains tightly coupled to Elastic. This creates lock-in for teams that want to diversify their security stack or route data to multiple platforms.

DataStream approach

DataStream was built to operate across heterogeneous environments, giving teams the ability to normalize once and route everywhere: Microsoft Sentinel, Splunk, Elastic, Amazon Security Lake, or low-cost object storage. This avoids vendor lock-in, reduces duplication of effort, and aligns with the multi-cloud, multi-tool reality of modern SOCs.
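
The "normalize once, route everywhere" idea can be sketched as a simple fan-out in Python. The `Route` class, the destination names, and the severity rule below are hypothetical and do not reflect DataStream's configuration model; a real sender would call each destination's ingestion API instead of appending to a list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Event = Dict[str, str]


@dataclass
class Route:
    """A hypothetical destination plus a predicate selecting which events it gets."""
    name: str
    accepts: Callable[[Event], bool]
    delivered: List[Event] = field(default_factory=list)

    def send(self, event: Event) -> None:
        # Stand-in for a real delivery call; here events are only collected.
        self.delivered.append(event)


def route_events(events: List[Event], routes: List[Route]) -> None:
    """Offer each already-normalized event to every route that accepts it."""
    for event in events:
        for destination in routes:
            if destination.accepts(event):
                destination.send(event)


routes = [
    Route("siem", lambda e: e["severity"] in {"high", "critical"}),
    Route("object_storage", lambda e: True),  # keep everything in cheap storage
]
events = [
    {"activity": "login_failed", "severity": "high"},
    {"activity": "dns_query", "severity": "info"},
]

route_events(events, routes)
print({r.name: len(r.delivered) for r in routes})  # {'siem': 1, 'object_storage': 2}
```

Because events are normalized before routing, adding another destination in this sketch means adding one more `Route` entry rather than writing a new parser.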

Challenge 4: Poor multi-tenancy support

Logstash lacks native multi-tenant capabilities, making it hard for MSSPs or large enterprises to separate customers or business units. Workarounds increase operational overhead and risk.

DataStream approach

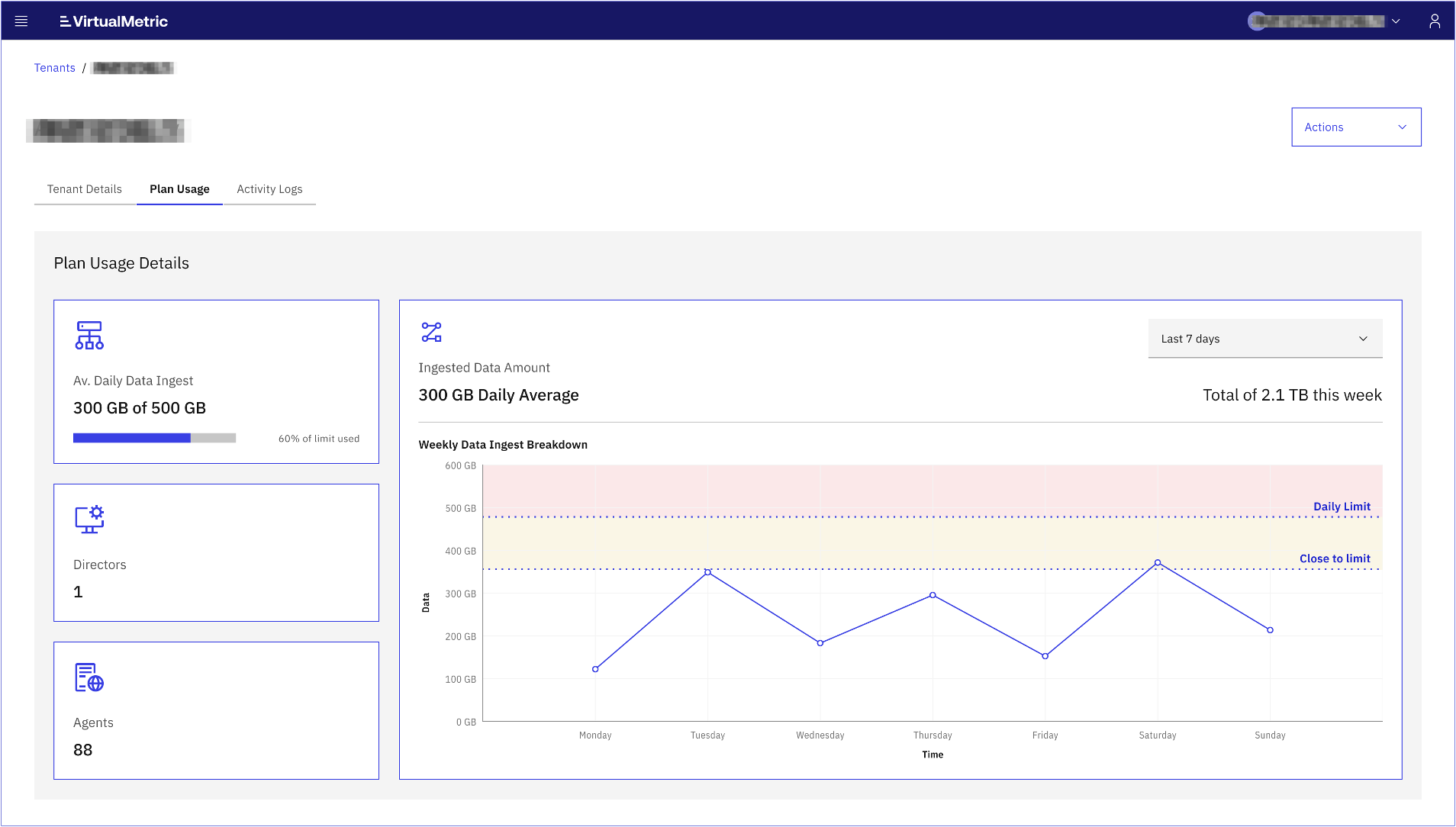

DataStream includes a dedicated MSSP module that allows a single administrator to view and manage multiple tenants. Usage limits and ingestion policies can be set per tenant from one interface. This is essential for service providers who need to manage hundreds of environments securely and efficiently.
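
As a rough illustration of per-tenant ingestion limits, the Python sketch below tracks a byte budget for each tenant and rejects events that would exceed it. The `TenantQuota` class, the tenant names, and the limits are assumptions made for this example and are not part of the DataStream MSSP module.

```python
from collections import defaultdict


class TenantQuota:
    """Track ingested bytes per tenant against a configured limit (hypothetical)."""

    def __init__(self, limits_bytes):
        self.limits = dict(limits_bytes)
        self.used = defaultdict(int)

    def admit(self, tenant, payload):
        """Return True and record usage if the payload fits the tenant's budget."""
        limit = self.limits.get(tenant)
        if limit is None:
            return False  # unknown tenants are rejected rather than mixed in
        size = len(payload)
        if self.used[tenant] + size > limit:
            return False
        self.used[tenant] += size
        return True


quota = TenantQuota({"tenant-a": 10, "tenant-b": 100})
results = [
    quota.admit("tenant-a", b"12345678"),  # 8 bytes, fits within 10
    quota.admit("tenant-a", b"12345"),     # would reach 13 bytes, rejected
    quota.admit("tenant-b", b"12345"),     # separate budget, fits
    quota.admit("tenant-c", b"x"),         # not configured, rejected
]
print(results)  # [True, False, True, False]
```

Keeping the counters keyed by tenant means one customer exhausting its budget has no effect on another's.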

Challenge 5: Slower deployment and scaling

Scaling Logstash often means deploying heavier nodes, each with its own tuning requirements. This creates bottlenecks and delays during expansion.

DataStream approach

DataStream is agentless and cloud-native. In controlled testing, it ingests logs up to 10x faster than traditional solutions and applies compression of up to 99%, minimizing bandwidth and storage usage. Raw data is always compressed and archived for compliance, while only actionable data is forwarded to the SIEM. A write-ahead log (WAL) ensures zero data loss even under heavy load. This combination makes scaling straightforward, reliable, and cost-efficient.
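
For readers unfamiliar with the write-ahead log technique, here is a minimal Python sketch of the general idea: each event is appended to durable storage and flushed to disk before it is acknowledged, so it can be replayed after a crash. This is a generic illustration, not DataStream's WAL; the `WriteAheadLog` class and the file name are hypothetical, and reopening the file on every append is chosen for clarity, not throughput.

```python
import json
import os
import tempfile


class WriteAheadLog:
    """Append-only JSON-lines log that is fsynced before each append returns."""

    def __init__(self, path):
        self.path = path

    def append(self, event):
        """Persist the event durably before the caller treats it as accepted."""
        with open(self.path, "a", encoding="utf-8") as handle:
            handle.write(json.dumps(event) + "\n")
            handle.flush()
            os.fsync(handle.fileno())

    def replay(self):
        """Read back every logged event, for example after a restart."""
        if not os.path.exists(self.path):
            return []
        with open(self.path, encoding="utf-8") as handle:
            return [json.loads(line) for line in handle if line.strip()]


wal_path = os.path.join(tempfile.mkdtemp(), "events.wal")
wal = WriteAheadLog(wal_path)
wal.append({"id": 1, "activity": "login_failed"})
wal.append({"id": 2, "activity": "login_ok"})

# Simulate a restart: a fresh object recovers the events from disk.
recovered = WriteAheadLog(wal_path).replay()
print([event["id"] for event in recovered])  # [1, 2]
```

A production log would also need rotation, checkpointing of already-delivered entries, and handling of partially written lines, all of which are omitted here.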

Summary

Security teams considering a Logstash replacement already recognize the limitations of legacy parsing and manual log pipelines. VirtualMetric DataStream provides a modern alternative: automated normalization, reliable ingest performance, and cost-efficient routing to the right destination.

For SOC managers and security architects, this means:

- Less manual effort with automated log handling and vendor templates.

- Lower SIEM and infrastructure costs with filtering, enrichment, and compression.

- Better visibility and detections with standardized, schema-ready data.

As one customer put it after migrating: “We no longer must choose between visibility and cost. DataStream makes both possible.”

See VirtualMetric DataStream in action

Start your free trial to experience safer, smarter data routing with full visibility and control.